Governing AI for Children: A Science Diplomacy Imperative

Blind Spot in Global AI Governance

At international meetings on AI safety and governance, the impact of AI on children and adolescents still sits at the margins, even though young people are the ones who will live through the consequences of today’s design and policy decisions. The iRAISE coalition, co-led by everyone.AI and the Paris Peace Forum, was created to close that gap by aligning developmental science, policy expertise, industry realities, and international dialogue so that child development becomes more actionable within global AI governance.

From Content Risks to Interaction Risks

Generative AI requires a shift in digital safety governance, which has long focused on the more immediate and overt risks to minors online, such as exposure to harmful content. LLM platforms do more than expose young users to content: they respond, adapt, and sustain exchanges over time, shaping the interaction itself. Natural-language interaction lowers barriers to information, rehearsal, and support, but when AI is designed to signal warmth, availability, and agreeableness, it can also reduce exposure to the friction and feedback that build judgment and independence.

Adolescence and the Social Pull of AI

The stakes are higher in adolescence, a sensitive period of brain development in which reward sensitivity matures ahead of impulse control and social cues carry particular weight. That sensitivity also makes adolescents more likely to be drawn to systems that feel responsive, affirming, and socially available. The expert consultations behind the iRAISE report make one point especially clear: adolescents will relate to these systems socially whether developers intend it or not, even when they know they are not talking to a person. This is precisely what raises the developmental stakes, because adolescence is also the period in which social skills should be strengthened through real-world friction, reciprocity, and repair.

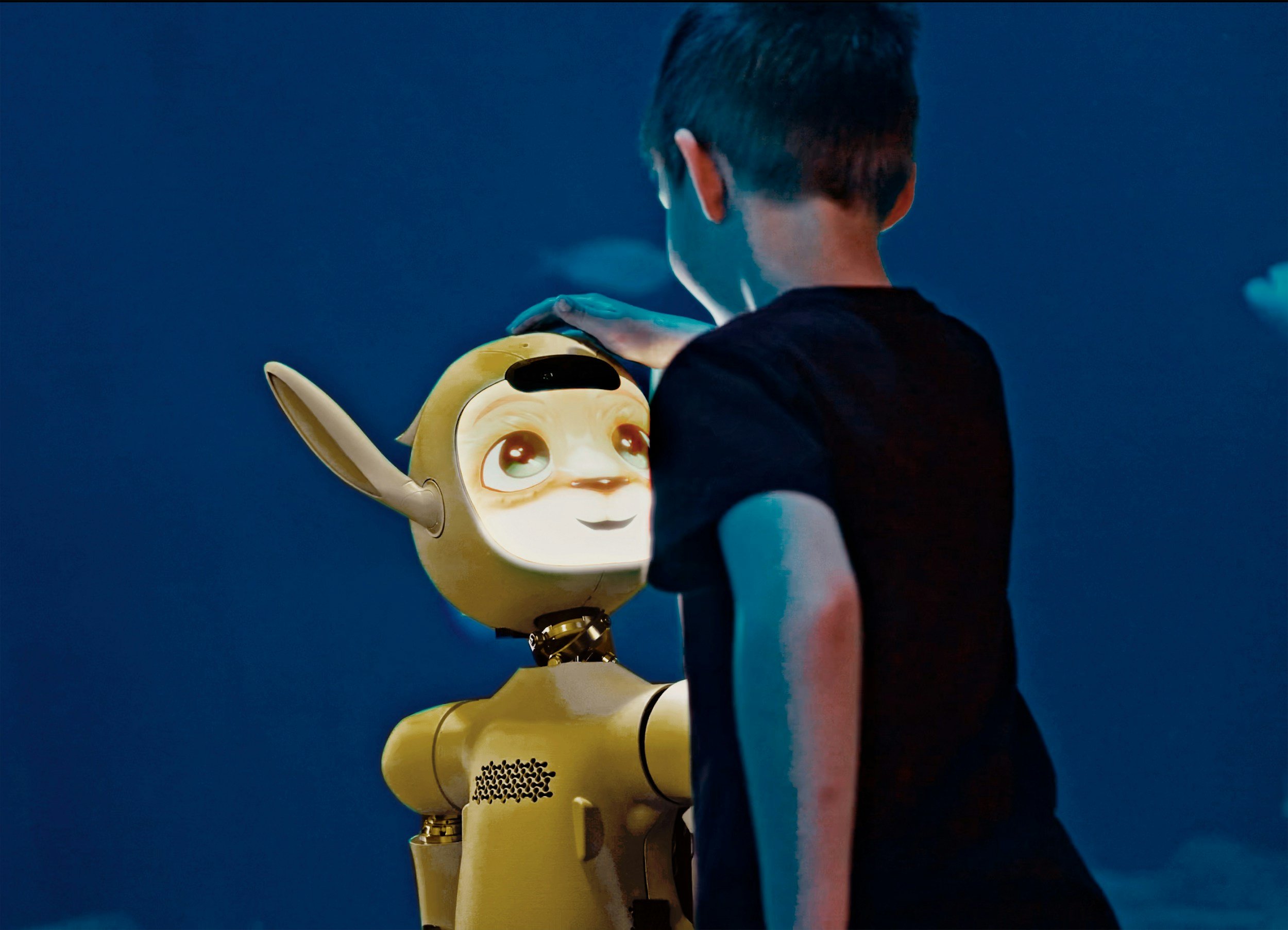

From Principles to Operational Standards

A simple disclosure that a system is artificial is therefore not enough if the interaction that follows uses emotional language, invented backstory, or relational cues that make it feel socially real. The issue is not only whether these behaviors are present, but how strongly they are expressed, how they combine, and at what point they shift a system from a bounded tool toward relationship-like dynamics. These are not binary features, but levers operating along a gradient. The iRAISE Lab, a two-day cross-sector workshop, was designed to translate that problem into an operational framework by identifying anthropomorphic, interactional, and relational cues that can be defined, compared, calibrated, and governed across systems.

Iterative Governance and the Role of Science Diplomacy

The empirical literature on long-term developmental effects is still too recent to offer the longitudinal clarity policymakers would ideally want, while deployment is already happening at scale. Governance therefore cannot wait for a mature evidence base before beginning to implement safety standards. Science diplomacy has a role to play here by creating the conditions for researchers, governments, industry, civil society, and young people to build provisional standards from current knowledge, test them across jurisdictions and cultural contexts, and revise them as the evidence develops. Under conditions of rapid deployment, iteration is not a compromise on rigor, but a more responsible way to govern.

References:

Neugnot-Cerioli, M. (2026). Adolescents & Anthropomorphic AI: Rethinking Design for Wellbeing. An Evidence-Informed Synthesis for Youth Wellbeing and Safety. arXiv. https://doi.org/10.48550/arXiv.2603.06960

UK Government. Online Safety Act: explainer. https://www.gov.uk/government/publications/online-safety-act-explainer/online-safety-act-explainer

OECD. (2025). How do people experience new technologies and perceive generative AI? https://doi.org/10.1787/49b8d10e-en